Understand Precision and Recall Once and for All

(Kisan Thapa)

Precision and Recall are two fundamental metrics in machine learning classification tasks, yet they often confuse beginners. Instead of memorizing definitions, let's understand them intuitively using a simple analogy: Fishing in a Pond.

The Fishing Analogy

Imagine you are a fisherman with a net (your Model), and you are fishing in a pond. The pond contains two types of creatures: Fish (which you want to catch) and Frogs (which you don't want).

- Fish represents the Positive Class (what we are looking for).

- Frog represents the Negative Class (what we want to ignore).

- The Net represents our Classifier/Model.

Let's assume the pond has 100 Fish and 10 Frogs. You cast your net once.

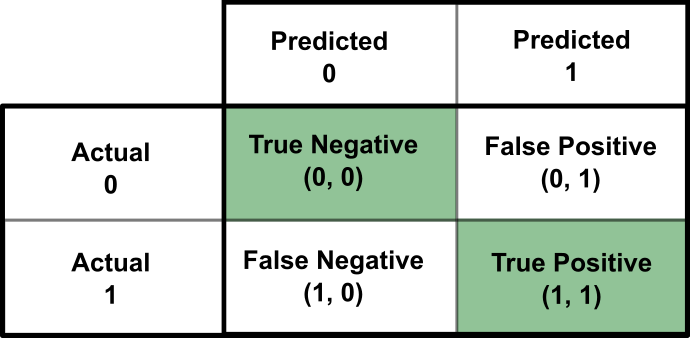

Understanding the Confusion Matrix

When you pull up your net, every creature in the pond falls into one of four outcomes:

- True Positive (TP): You wanted to catch a fish, and you caught a fish. (Success!)

- False Positive (FP): You wanted to catch a fish, but the net caught a frog. This is a "False Alarm" or Type I Error. You predicted positive (by catching it), but it was negative.

- True Negative (TN): You ignored a frog, and it was indeed a frog. (Success! The net didn't pick up the trash).

- False Negative (FN): You missed a fish that was swimming right there. This is a "Miss" or Type II Error. You predicted negative (by not catching it), but it was positive.
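The four outcomes above can be counted directly once you know, for each creature, what it really was and whether the net caught it. The sketch below is only an illustration: the function name `confusion_counts` and the outcome of the cast (80 fish and 2 frogs caught) are assumptions made for this example, not numbers fixed by the analogy.

```python
def confusion_counts(actual, caught):
    """Count TP, FP, TN, FN.

    actual[i] is True if creature i is a fish (positive class).
    caught[i] is True if the net caught creature i (predicted positive).
    """
    pairs = list(zip(actual, caught))
    tp = sum(1 for a, c in pairs if a and c)          # fish, caught
    fp = sum(1 for a, c in pairs if not a and c)      # frog, caught
    tn = sum(1 for a, c in pairs if not a and not c)  # frog, left in the pond
    fn = sum(1 for a, c in pairs if a and not c)      # fish, missed
    return tp, fp, tn, fn


# The pond from the analogy: 100 fish followed by 10 frogs.
actual = [True] * 100 + [False] * 10
# Assumed result of one cast: 80 fish caught, 20 fish missed,
# 2 frogs caught, 8 frogs left alone.
caught = [True] * 80 + [False] * 20 + [True] * 2 + [False] * 8

tp, fp, tn, fn = confusion_counts(actual, caught)
print(tp, fp, tn, fn)  # 80 2 8 20
```

Note that the four counts always add up to the total number of creatures in the pond (here 110).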

Precision: Quality of the Catch

Precision asks the question: "Out of all the things I caught in my net, how many were actually fish?"

It measures the purity of your positive predictions. If you have high precision, it means when you predict something is a fish, you are usually right.

In our analogy, if you caught 10 creatures, and 8 were fish and 2 were frogs, your precision is $8/10 = 0.8$ or 80%.
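Written with the confusion matrix terms, this is $\text{Precision} = TP / (TP + FP)$. A minimal sketch of that calculation follows; the helper name `precision` and the choice to return 0.0 for an empty net are assumptions made for illustration.

```python
def precision(tp, fp):
    """Fraction of everything caught that was actually fish."""
    total_caught = tp + fp
    if total_caught == 0:
        # Nothing was caught, so precision is undefined.
        # Returning 0.0 is a convention assumed for this sketch.
        return 0.0
    return tp / total_caught


# The catch from the example: 8 fish (TP) and 2 frogs (FP).
p = precision(tp=8, fp=2)
print(p)  # 0.8
```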

Recall: Quantity of the Catch

Recall (also called Sensitivity) asks the question: "Out of all the fish available in the pond, how many did I manage to catch?"

It measures how completely you found the actual positives. High recall means you didn't miss many fish.

If there were 100 fish in the pond, and you caught 80 of them (regardless of how many frogs you also caught), your recall is $80/100 = 0.8$ or 80%.
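In confusion matrix terms, this is $\text{Recall} = TP / (TP + FN)$. The sketch below mirrors the example; the helper name `recall` and the 0.0 fallback for a pond with no fish are assumptions made for illustration.

```python
def recall(tp, fn):
    """Fraction of all fish in the pond that ended up in the net."""
    total_fish = tp + fn
    if total_fish == 0:
        # No fish in the pond, so recall is undefined.
        # Returning 0.0 is a convention assumed for this sketch.
        return 0.0
    return tp / total_fish


# The example: 80 fish caught (TP) and 20 fish missed (FN).
r = recall(tp=80, fn=20)
print(r)  # 0.8
```

Frogs do not appear anywhere in this formula, which is why the number of frogs caught has no effect on recall.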

The Precision-Recall Trade-off

Ideally, we want both high precision and high recall, but in practice, they often trade off against each other. Think about adjusting the size of your net's mesh or your strategy:

Scenario A: The "Strict" Net (High Precision, Low Recall)

You decide you absolutely hate touching frogs. You make your net very selective (maybe you only catch things that look 100% like a perfect fish).

- Result: You will catch very few frogs (Low FP $\rightarrow$ High Precision).

- Trade-off: You will likely miss many odd-looking or fast-swimming fish (High FN $\rightarrow$ Low Recall).

Scenario B: The "Lenient" Net (Low Precision, High Recall)

You decide you must catch every single fish to feed your village. You use a massive net and scoop up everything.

- Result: You catch almost every fish (Low FN $\rightarrow$ High Recall).

- Trade-off: You will also catch a lot of frogs and trash (High FP $\rightarrow$ Low Precision).
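One common way to picture the two scenarios is a score threshold: the net gives each creature a "fishiness" score and catches everything at or above a cutoff. The sketch below assumes that setup. The scores, the cutoffs 0.75 and 0.35, and the function name `precision_recall_at` are all made up for illustration.

```python
# Hypothetical creatures as (fishiness score, is it really a fish?).
creatures = [
    (0.95, True), (0.90, True), (0.80, True), (0.70, False),
    (0.60, True), (0.40, True), (0.30, False), (0.10, False),
]


def precision_recall_at(threshold, creatures):
    """Catch everything scoring >= threshold, then score the catch."""
    tp = sum(1 for score, is_fish in creatures if score >= threshold and is_fish)
    fp = sum(1 for score, is_fish in creatures if score >= threshold and not is_fish)
    fn = sum(1 for score, is_fish in creatures if score < threshold and is_fish)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


# Scenario A: strict net, only very fish-like creatures are caught.
strict = precision_recall_at(0.75, creatures)
# Scenario B: lenient net, almost everything is caught.
lenient = precision_recall_at(0.35, creatures)

print("strict: ", strict)   # precision 1.0, recall 0.6
print("lenient:", lenient)  # precision about 0.83, recall 1.0
```

With these made-up scores, raising the cutoff removes the frog from the catch but also loses two fish, while lowering it recovers every fish at the cost of catching a frog.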

Conclusion

There is no "perfect" balance that applies to every problem. The right balance depends on your specific goal:

- Spam Detection: We prefer High Precision. We don't want to accidentally label a real email as spam (False Positive). It's okay to miss a few spam emails (False Negative).

- Cancer Diagnosis: We prefer High Recall. We cannot afford to miss a sick patient (False Negative). It's better to flag a healthy person for further testing (False Positive) than to let a sick person go home.